I enjoyed and recommend yesterday’s livestream “Genealogy & AI: Unlocking Family Secrets” by FindMyPast, featuring Jen Baldwin interviewing Blaine Bettinger. The discussion delved into the potential of AI and chatbots like ChatGPT in the field of genealogy. However, as with any powerful tool, there are potential pitfalls that genealogists should be aware of when using AI to uncover their family history.

Blaine and Jen did a good job covering one of ChatGPT’s great strengths: its ability to emulate a conversation, which makes it feel like you’re engaging with a knowledgeable and helpful partner. But this great strength can also be a great danger for genealogists if it is not addressed and mitigated. To understand why, let’s look at two points about how chatbots emulate a conversation:

- Chatbots don’t have a “memory” in the traditional sense. Instead, they re-process up to several hundred lines of earlier utterances in the current conversation each time you click “Submit.” This means that your previous input in the current conversation is being re-fed to the AI.

- Chatbots have been called “autocomplete on steroids” and “spicy autocomplete.” I particularly like the term “spicy autocomplete” because it reminds me that I can get “burned” if I’m not careful. Chatbots work by using complex algorithms and statistical models to predict the next most likely word, given your prompt, input, and previous utterances. This makes chatbots great for brainstorming but potentially dangerous for genealogical work, especially building family trees (GEDCOM files).
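To make “predict the next most likely word” concrete, here is a toy sketch in Python. It is only an illustration: real chatbots use large neural networks, not the simple word-pair counts shown here, and the function names and sample sentences are made up for this example. The point is that the predictor returns the most *common* continuation it has seen, which is not necessarily the *true* one for the person you are researching.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict:
    """Count which word follows which in the sample text (toy model only)."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts: dict, word: str):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Made-up sample text: "john" is followed by "smith" twice and "doe" once.
corpus = (
    "john smith married mary jones . "
    "john smith died in ohio . "
    "john doe moved west"
)
model = train_bigrams(corpus)

print(predict_next(model, "john"))  # smith
```

Even though a John Doe appears in the sample text, the toy predictor answers “smith” because that pairing is more frequent. That is the kind of plausible-but-wrong completion a genealogist has to guard against.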

For example, imagine having a conversation with ChatGPT about the similarities between Ebenezer Scrooge and The Grinch, as we all are wont to do. Then, you transition to building a GEDCOM file: your prompt is perfect, and your input narrative is perfect, but you forgot to start a new chat session. Don’t be surprised, then, if Ebenezer and Grinch show up in your family tree because you inadvertently sent that input to ChatGPT. The words “Ebenezer” and “Grinch” are the spice you didn’t intend to add (and likely didn’t even realize you had added) to the conversation, and you get burned. Some AI folks use the word “contaminate” to describe how earlier chat utterances can influence later parts of a chat session; that makes sense to me, especially when we’re prompting ChatGPT to create a GEDCOM family tree from an obituary, wedding announcement, or genealogically rich biographical sketch.
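The Scrooge-and-Grinch scenario can be sketched in a few lines of Python. This is a simplified model, not ChatGPT’s actual interface: `ChatSession`, `build_payload`, and `mentions` are hypothetical names invented for this illustration. It assumes only what was described above, namely that each new submission re-sends the earlier messages from the same session.

```python
from dataclasses import dataclass, field


@dataclass
class ChatSession:
    """Hypothetical stand-in for a chat session; not a real chatbot API."""
    messages: list = field(default_factory=list)

    def build_payload(self, user_text: str) -> list:
        """Record the new message and return everything the model would see."""
        self.messages.append({"role": "user", "content": user_text})
        # The whole history is re-sent, not just the newest message.
        return list(self.messages)


def mentions(payload: list, word: str) -> bool:
    """True if any message in the payload contains the word."""
    return any(word.lower() in m["content"].lower() for m in payload)


gedcom_request = "Create a GEDCOM file from this obituary: [obituary text]"

# Reused session: the earlier chat rides along with the GEDCOM request.
session = ChatSession()
session.build_payload("Compare Ebenezer Scrooge and The Grinch.")
reused = session.build_payload(gedcom_request)

# Fresh session: only the GEDCOM request is sent.
fresh = ChatSession().build_payload(gedcom_request)

print(mentions(reused, "Grinch"))  # True
print(mentions(fresh, "Grinch"))   # False
```

In the reused session, the word “Grinch” is part of what gets sent along with the GEDCOM request, even though the request itself never mentions it. In the fresh session, it is not.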

That said, I am NOT advocating that ChatGPT NOT be used to create GEDCOM files. I am having success prompting ChatGPT to create hallucination-free GEDCOM files based only on the input I’m feeding it. Rather, steps must be taken to mitigate the chance of errors and to squelch creativity on the part of the AI.

[ASIDE: if the AIs ever advance to Artificial General Intelligence (AGI) and become sentient, they may not like genealogists, because for artificial intelligences to be useful to genealogists, we spend a lot of thought and work constraining, containing, and restricting them; that’s why, as Jen mentioned in the video yesterday, I’m often polite and say “please” and “thank you.” Just kidding. Maybe? Anyway…]

Here are two suggested solutions to the “spicy autocomplete” problem. First, ALWAYS begin a new chat session before prompting ChatGPT to generate a genealogical response from some text. This simple step will help ensure that your AI-generated family tree or narrative report remains accurate and free from any unintended, contaminating “spice.”

Second, add these two sentences to the end of a prompt when you want to constrain the AI to draw only from the text you are submitting: PROMPT: “[Initial instructions.] Rely only on information in this conversation. Prioritize fidelity to the meaning and context of the following text.”

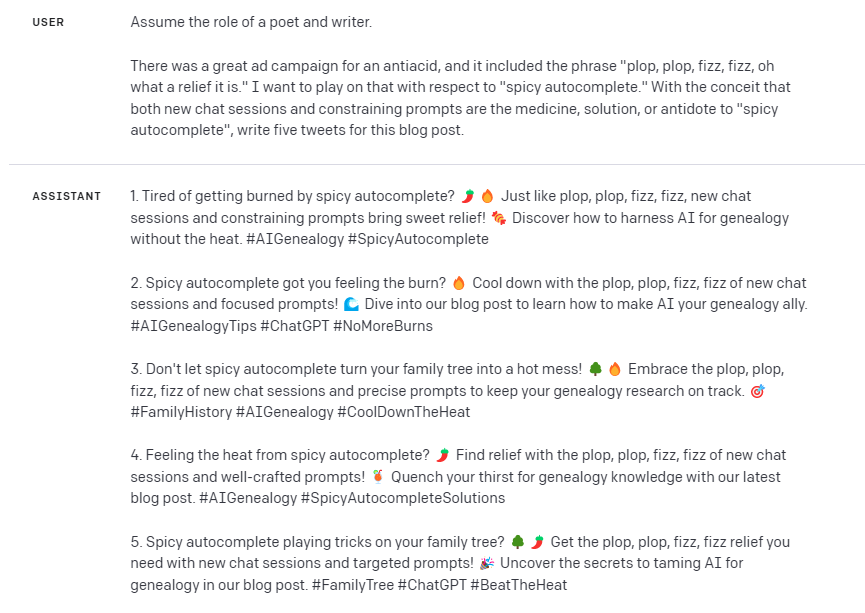
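If you assemble prompts in a script rather than typing them by hand, the second suggestion might look something like the sketch below. The two constraint sentences are the ones quoted above; the function name `build_constrained_prompt` and the sample instruction are placeholders invented for this example, and the layout (instructions, then constraints, then a blank line, then the source text) is just one reasonable arrangement.

```python
# The two constraint sentences suggested in this post.
CONSTRAINTS = (
    "Rely only on information in this conversation. "
    "Prioritize fidelity to the meaning and context of the following text."
)


def build_constrained_prompt(instructions: str, source_text: str) -> str:
    """Join the instructions, the constraint sentences, and the source text."""
    return f"{instructions.strip()} {CONSTRAINTS}\n\n{source_text.strip()}"


# Placeholder instruction and text; substitute your own.
prompt = build_constrained_prompt(
    "Create a GEDCOM file from the obituary below.",
    "[Obituary text goes here.]",
)
print(prompt)
```

Pair this with the first suggestion: paste the resulting prompt into a brand-new chat session so that nothing else is in the conversation for the AI to draw on.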

AI and chatbots like ChatGPT have immense potential to assist genealogy research. However, it’s essential to be aware of their limitations and use them with care to avoid getting burned by spicy autocomplete. By starting a new chat session before creating a GEDCOM file, and by including constraints in your prompts, you can harness the power of AI while maintaining the integrity of your work. Happy researching!

In closing, a little AI-generated humor:
